The more I use AWS, the more I think that this is how everyone should be running their IT environment. So far, so good.

Everything that I’ve wanted from my environment, AWS has been able to provide. I’ve not had problems with anything of significance; it’s all been working well. That is quite the opposite of my recent experience with some engineered solutions from a certain vendor. I must admit I cannot believe that you can buy an engineered solution from a vendor and then need to pay for over 35 days of consulting to put a single database on it, or that it cannot be fixed if the client decides to install the machine themselves. I’m struggling with the concept of an appliance, to be honest, but that is a blog for another time.

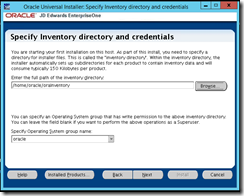

I’ve just been unit testing a JD Edwards installation on AWS. I chose RDS and EE for a reason: I wanted high availability and DR. I didn’t really need a lot of the other functionality (although I do like performance and statistics).

I’ve done interactive load testing and batch load testing, so I know exactly how things perform on this machine with EE. But the client gets free standard edition with JD Edwards, so how about I test that?

I can still do a multiple zone standard edition implementation through the AWS wizards! So, how cool is this? I get my high availability and DR with BYOL for the database on AWS. Could this be serious? I know I’m really going to have to look into this carefully.

So you can see from the above that I’m creating a new database from a snapshot that I took 30 minutes ago. Wow, that is cool.

I can also change the instance engine to be standard edition.

So I’ve created a standard edition database from an EE snapshot.

All I need to do is change tnsnames.ora to point to the new standard edition database, and then run all of my performance tests again. The small lesson here: do not create a DB and then expect to restore a snapshot into it. Create the DB from the snapshot.
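I did all of this through the AWS wizard, but the same restore could probably be scripted. Below is a minimal sketch, assuming boto3 and its `restore_db_instance_from_db_snapshot` call. The snapshot name, instance name, region and the `oracle-se2` engine value are all placeholders I made up for illustration, and whether a given EE snapshot can be restored to a standard edition engine should be checked against the current RDS documentation.

```python
def restore_request(snapshot_id, new_instance_id, engine="oracle-se2", multi_az=True):
    """Build the keyword arguments for an RDS restore-from-snapshot call.

    All identifiers are hypothetical; the engine default is an assumption.
    """
    return {
        "DBInstanceIdentifier": new_instance_id,   # name of the NEW instance
        "DBSnapshotIdentifier": snapshot_id,       # snapshot taken from the EE instance
        "Engine": engine,                          # target edition (assumed value)
        "LicenseModel": "bring-your-own-license",  # BYOL, as described in the post
        "MultiAZ": multi_az,                       # keep the multiple zone setup
    }


def restore_from_snapshot(params, region="ap-southeast-2"):
    """Send the request to AWS. Needs boto3 and valid credentials."""
    import boto3  # imported lazily so restore_request() works without boto3

    rds = boto3.client("rds", region_name=region)
    rds.restore_db_instance_from_db_snapshot(**params)
    # Wait until the restored instance reports "available".
    rds.get_waiter("db_instance_available").wait(
        DBInstanceIdentifier=params["DBInstanceIdentifier"]
    )


if __name__ == "__main__":
    # Only builds and prints the request; nothing is sent to AWS here.
    print(restore_request("jde-ee-snap", "jde-se"))
```

Note that the call creates a new instance from the snapshot rather than restoring into an existing one, which matches the lesson above.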

Remember that you cannot just take a database instance down and then bring it back up when you want (well, you can terminate it). Instead, you need to delete the instance, taking a final snapshot, and then restore from that snapshot if you need it again. This is awesome for any load testing that you might want to perform, as you can take the database back to how it was before the load testing was run.
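The delete-with-final-snapshot step could be sketched like this, again assuming boto3 (`delete_db_instance` with `FinalDBSnapshotIdentifier`). The instance name, region and the snapshot naming scheme are my own illustrative choices, not anything AWS requires.

```python
from datetime import datetime


def final_snapshot_name(instance_id, when):
    """Hypothetical naming scheme: <instance>-final-YYYYMMDD-HHMM."""
    return f"{instance_id}-final-{when:%Y%m%d-%H%M}"


def delete_request(instance_id, final_snapshot_id):
    """Build the keyword arguments for deleting an instance but keeping its data."""
    return {
        "DBInstanceIdentifier": instance_id,
        "SkipFinalSnapshot": False,                      # insist on a final snapshot
        "FinalDBSnapshotIdentifier": final_snapshot_id,  # what to restore from later
    }


def park_instance(instance_id, region="ap-southeast-2"):
    """Delete the instance and return the snapshot name to restore from.

    Needs boto3 and valid credentials; the region is a placeholder.
    """
    import boto3  # imported lazily so the helpers above work without boto3

    snapshot_id = final_snapshot_name(instance_id, datetime.now())
    rds = boto3.client("rds", region_name=region)
    rds.delete_db_instance(**delete_request(instance_id, snapshot_id))
    # Wait until the instance is really gone before reusing its name.
    rds.get_waiter("db_instance_deleted").wait(DBInstanceIdentifier=instance_id)
    return snapshot_id
```

Keeping the returned snapshot name somewhere safe is the important part, since it is the only way back to the pre-test state.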

To be honest, this might have allowed me to put AWS DBA on my resume. I can create and restore a highly available database with ease. This also makes me think that I could re-architect my designs going forward. I could easily have a prod instance (which contains ORADTA and ORACTL). I could have a shared instance for ALL other owners (DD, OL, PY, PD, SY etc.) and then instances for each additional environment. Then, when I needed to do a refresh, all I’d have to do is create a snapshot of prod and then restore it under the same name as my target, CLIENT_CRP for example. I’d then have a COMPLETE data refresh in a very small amount of time. The only cost is a small outage while I delete the existing instance and create the new one.
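The refresh idea above can be written down as an ordered plan. This is only a sketch under my own assumptions: the operation names are boto3 RDS client methods, but the instance and snapshot names are invented, and I use `client-crp` rather than `CLIENT_CRP` because, as far as I know, RDS instance identifiers only allow letters, digits and hyphens.

```python
def refresh_plan(prod_id, target_id, snapshot_id):
    """Return the ordered RDS calls for refreshing target_id from prod_id."""
    return [
        # 1. Snapshot prod first, while the target is still up (no outage yet).
        ("create_db_snapshot",
         {"DBInstanceIdentifier": prod_id, "DBSnapshotIdentifier": snapshot_id}),
        # 2. Drop the old target; its data is about to be replaced anyway.
        ("delete_db_instance",
         {"DBInstanceIdentifier": target_id, "SkipFinalSnapshot": True}),
        # 3. Recreate the target under the same name from the prod snapshot.
        ("restore_db_instance_from_db_snapshot",
         {"DBInstanceIdentifier": target_id, "DBSnapshotIdentifier": snapshot_id}),
    ]


# For each operation: the boto3 waiter to block on, and the key it waits for.
WAITERS = {
    "create_db_snapshot": ("db_snapshot_available", "DBSnapshotIdentifier"),
    "delete_db_instance": ("db_instance_deleted", "DBInstanceIdentifier"),
    "restore_db_instance_from_db_snapshot": ("db_instance_available", "DBInstanceIdentifier"),
}


def run_plan(plan, region="ap-southeast-2"):
    """Execute the plan step by step. Needs boto3 and valid credentials."""
    import boto3  # imported lazily so refresh_plan() works without boto3

    rds = boto3.client("rds", region_name=region)
    for operation, kwargs in plan:
        getattr(rds, operation)(**kwargs)
        waiter_name, key = WAITERS[operation]
        rds.get_waiter(waiter_name).wait(**{key: kwargs[key]})


if __name__ == "__main__":
    # Print the steps only; nothing is sent to AWS here.
    for step in refresh_plan("client-prod", "client-crp", "client-prod-refresh"):
        print(step)
```

Taking the snapshot before deleting the target keeps the outage down to steps 2 and 3.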

Creating the standard edition database was sooo simple, thanks AWS.

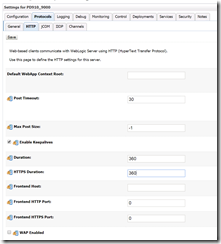

I updated the omdatb fields in sy910.f98611 and svm910.f98611 in my new standard edition database. Easy. I then created a new tns entry on all of my machines with the new DB server name. Note that in AWS your server name is going to be huge, but JDE.INI does not care about that long name; it only uses tnsnames.ora and the DB alias, so that is easy. You don’t really need to fill out the hostname in [DB SYSTEM SETTINGS], but what you put there gets written to the log files, haha!
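To make those two changes concrete, here is a small sketch that generates the text involved. The alias, host name and SID are invented placeholders, the use of `SID` in the connect data is an assumption, and the `WHERE` clause is just one guess at how you might target the rows (you may well want to filter differently).

```python
def tns_entry(alias, host, sid, port=1521):
    """Render a tnsnames.ora entry for the new database (all values hypothetical)."""
    return (
        f"{alias} =\n"
        f"  (DESCRIPTION =\n"
        f"    (ADDRESS = (PROTOCOL = TCP)(HOST = {host})(PORT = {port}))\n"
        f"    (CONNECT_DATA = (SID = {sid}))\n"
        f"  )\n"
    )


def f98611_updates(schemas=("sy910", "svm910")):
    """Return one UPDATE per schema, using bind variables :new_db and :old_db."""
    return [
        f"UPDATE {schema}.f98611 SET omdatb = :new_db WHERE omdatb = :old_db"
        for schema in schemas
    ]


if __name__ == "__main__":
    # The long RDS endpoint only lives in tnsnames.ora; JDE just sees the alias.
    print(tns_entry("JDESE", "jde-se.example.ap-southeast-2.rds.amazonaws.com", "ORCL"))
    for statement in f98611_updates():
        print(statement + ";")
```

The statements are only printed here; review them before running them against the new database.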

I then changed the system tnsnames entry in JAS.INI and JDE.INI to point to the new tns entry and restarted JDE. Now all my connections are going to the new database.

So I essentially downgraded my EE database for free Oracle licences in about 1 hour (note: with Oracle Technology Foundation). Completely!

The load test against my standard edition database is going fine too, so it looks like this might be the way forward for this implementation.

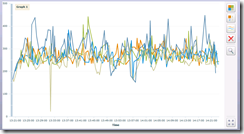

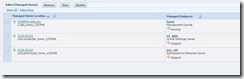

Above is runtime; each colour is a different script. I’ve not got a single error, and I have 50 users pounding the system with 0 delay.

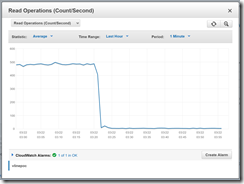

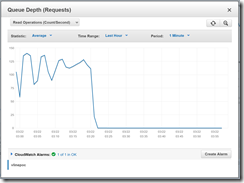

Database is busy, but not exceptionally so.

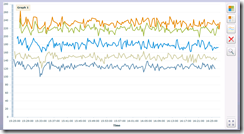

You can also see from the above that my machines are busy, but this test is relentless… I’ve also got the JDE job scheduler going full speed, so there is no rest for the wicked. It’s really good to see that the machines are getting used; there is nothing worse than bad performance and 0% CPU all over the place. I have good performance and CPU getting used. I could not ask for more.